End goal

In this blog post we will explore how we can use a tool called OLlama to run Large Language Models like Mixtral, CodeLlama and Llama 2 on consumer hardware. We will start by learning how to create an endpoint that can be used to query the models and then we will implement a Python tool that lets us communicate with the model. For testing purposes, we will only be able to access this endpoint from within our own wireless network. If you want to access it from anywhere in the world, you will need to use a tunneling service to expose it to the internet.

Why do this?

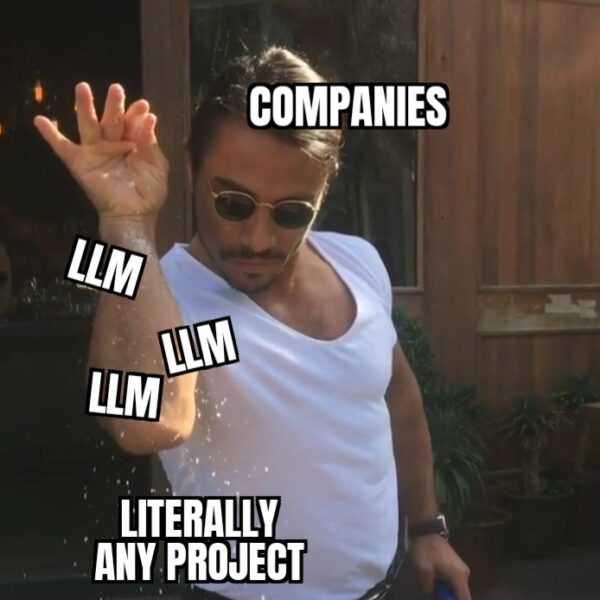

LLM is the hot new buzzword in IT. If you’re not using LLMs to create an e-mail filtering bot for your company or to generate a website from a small sketch on a whiteboard, you are basically losing the AI race. Everyone is doing it…or at least everyone with money to spend.

Unfortunately, LLMs (the good ones) are difficult to use on consumer hardware, because they require a lot of resources. And if you want to train one (or fine-tune it), you’d better forget it, unless you have some really beefy GPUs. This is a problem for most people who like to tinker and build stuff, because you can’t just buy a few NVIDIA A100s and start experimenting, due to the high cost.

To be more precise, the problem is that you need a lot of VRAM to hold your model. Sure, it helps to have powerful graphics cards, so the models run faster, but I’d rather wait a few seconds for a response than not use an LLM at all because I need a GPU that costs as much as a car.

If you are an independent programmer who likes to try out stuff and wants to learn, but you don’t have the resources to throw money at experiments, you’re exactly the person that this blog post is for!

OLlama is a really neat tool that allows you to run inference on a large number of Large Language Models without needing to fit them entirely in VRAM. The tool is open source and it provides a large number of features that let you do quite a lot with it.

What we'll need

Just as we discussed above, using good LLMs is expensive due to the high cost of graphics cards. In this blog post, we will explore an approach that is more friendly to our wallets, but we will still need a decent computer.

To reproduce the project I will present in this post, you will need at least 48 GB of RAM. This is the minimum amount of memory recommended, in order to be able to run Mixtral 8X7B, but you can choose a different model and everything should work just fine. The computer I am working on has 64 GB of RAM, an RTX 3080 and a Ryzen 5900X CPU. Although it can handle everyday tasks easily, using LLMs is still a challenge for this computer.
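A rough back-of-the-envelope calculation helps explain why memory is the bottleneck. The sketch below estimates the size of the weights alone at different precisions. The parameter count (about 46.7 billion for Mixtral 8x7B) and the bit widths are assumptions made for illustration, the helper name `weights_size_gb` is made up, and real memory use will be higher because of the context and runtime overhead.

```python
def weights_size_gb(n_params, bits_per_weight):
    """Approximate size of the model weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed figure: roughly 46.7 billion parameters for Mixtral 8x7B.
MIXTRAL_PARAMS = 46.7e9

for bits in (16, 8, 4):
    size = weights_size_gb(MIXTRAL_PARAMS, bits)
    print(f"{bits}-bit weights: roughly {size:.0f} GB")
```

Under these assumptions, only a heavily quantized version of the model fits comfortably in the amount of RAM mentioned above.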

If you want to use a different model, you can browse the entire library of models available for OLlama.

Other than a decently powerful computer, we’ll also need an internet connection and access to macOS or Linux. If you’re on Windows, you’ll need to install the Windows Subsystem for Linux (WSL).

Let's get started!

The first thing we need to do is to download OLlama and install it on our machine.

If you want, you can simply follow the instructions from the OLlama GitHub Repository. Also, if you’re on a Mac, you’ll find a download link for OLlama on the GitHub repo.

I will be using the Windows Subsystem for Linux (WSL). If you’re on Linux or if you’re also using WSL, you can simply run the following command:

curl https://ollama.ai/install.sh | sh

To test if the installation was successful, open up a Terminal, type the following command and hit Enter.

ollama run mixtral

If everything went fine, you should see OLlama downloading the components for Mixtral. After the download is complete, it should prompt you to send a message to the model. You can go ahead and try it out to see how it works.

To exit out of OLlama when you’re running it as a tool in the Terminal, type the command ‘/bye’.

Next we will want to create our endpoint. It’s nice to have the model in our Terminal, but it would be a lot nicer to be able to offload the calculations to some other computer.

For the sake of simplicity, I will not be using a different computer, but if you want, you can expand this project. You can take another computer and use it to run OLlama and have it continuously run an LLM. That way, you’ll have a designated computer in your home that is basically your personal assistant.

Creating the endpoint is pretty straightforward.

First, we need to run this command:

ollama serve

This command will start the OLlama tool as a server, which will run on localhost, on port 11434.

Then, in a different Terminal, run the following command:

ollama run mixtral

It is important to run the second command in a different Terminal or just a different window in the same Terminal. Otherwise, OLlama will not function properly.

If you want to get it to work more elegantly, you can try using tmux to have two separate sessions and run each command in its own session.

With the OLlama server started and Mixtral running in a different Terminal, you basically have the endpoint ready.
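Before writing any client code, it can be reassuring to confirm that something is actually answering on that port. The sketch below uses only Python's standard library. The helper name `server_is_up` is made up for this example, and it assumes the server is listening on the default address mentioned above and answers a plain GET request with HTTP 200.

```python
import http.client
import urllib.request


def server_is_up(base_url="http://localhost:11434", timeout=2.0):
    """Return True if something answers with HTTP 200 at base_url, False otherwise."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, ValueError, http.client.HTTPException):
        # Connection refused, timeout, DNS failure, HTTP error status
        # or a malformed URL all count as "not reachable" here.
        return False


if __name__ == "__main__":
    print("server reachable:", server_is_up())
```

If this prints `False`, the first thing to check is whether the `ollama serve` Terminal is still open.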

That’s it!

We created an endpoint for an LLM that can be used on consumer hardware in about 4 commands.

That’s pretty good!

Chatting with our new friend

In order to be able to chat with Mixtral the way we would chat with GPT-4, we need to do a bit of coding.

We will first create a Python script that will talk with our endpoint.

Let’s start with a simple skeleton for our program:

import requests
import json


# Main function that will do everything
def main():
    # The URL of our endpoint
    url = "http://localhost:11434/api/chat"

    # We'll use this to store the current prompt from the user.
    prompt = ""

    # We'll use an array to store all of the messages between the user and
    # the LLM in the current session.
    history = []

    # Here we will read the user's input until they enter 'bye'
    while prompt != "bye":
        pass


# Call the main function
if __name__ == "__main__":
    main()

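The ‘url’ variable is used to set the URL of the endpoint. If you host the OLlama server on a different computer, you will have to change its value to the correct one. Keep in mind that, by default, the server only listens on localhost, so the other computer also has to be configured to accept connections from the network (for example by setting the OLLAMA_HOST environment variable before starting the server).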
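If the server does end up on another machine, it can help to keep the host and port out of the URL string. A small sketch, where the helper `chat_url` and the address `192.168.1.50` are hypothetical and only serve as an illustration:

```python
def chat_url(host="localhost", port=11434):
    """Build the chat endpoint URL for a server running at host:port."""
    return f"http://{host}:{port}/api/chat"


# The local server used in this post
print(chat_url())

# Hypothetical address of another machine on the same network
print(chat_url("192.168.1.50"))
```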

Next, the ‘prompt’ variable will be used repeatedly to read the user’s input and the ‘history’ variable will keep track of every message between the user and the LLM for the session’s duration. If the script is interrupted, the history will be lost.

Lastly, the ‘while’ loop will be used to create the actual conversation between the user and the model.
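To make the role of ‘history’ more concrete, here is a sketch of what it might look like after a couple of turns and how it ends up in the request body. The helper `build_payload` is made up for this example, and the placeholder reply stands in for whatever the model actually answers.

```python
def build_payload(history, model="mixtral", stream=True):
    """Assemble the JSON body for one chat request from the running history."""
    return {"model": model, "messages": list(history), "stream": stream}


history = []
history.append({"role": "user", "content": "What ingredients do I need to make pancakes?"})
# The model's reply is stored as well, so the next request carries it along.
history.append({"role": "assistant", "content": "(the model's reply goes here)"})
history.append({"role": "user", "content": "Can you make the recipe gluten-free?"})

payload = build_payload(history)
print(len(payload["messages"]))          # 3
print(payload["messages"][-1]["role"])   # user
```

Because the whole list is sent with every request, the model sees the full conversation each time, which is what lets it answer follow-up questions.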

Let’s complete the program:

import requests
import json


# Main function that will do everything
def main():
    # The URL of our endpoint
    url = "http://localhost:11434/api/chat"

    # We'll use this to store the current prompt from the user.
    prompt = ""

    # We'll use an array to store all of the messages between the user and
    # the LLM in the current session.
    history = []

    # Create one session and reuse it for every request
    sess = requests.Session()

    # Here we will read the user's input until they enter 'bye'
    while prompt != "bye":
        # Read user input
        prompt = input("User => ")

        # Check if the exit command was received
        if prompt == "bye":
            break

        # Append the current message to the history.
        history.append({"role": "user", "content": prompt})

        # The data that will be sent to the OLlama endpoint
        data = {
            "model": "mixtral",
            "messages": history,
            "stream": True
        }

        # Print a pretty message for UX purposes
        print("Assistant =>", end="")

        # We'll collect the streamed pieces here to rebuild the full reply
        reply = ""

        # Stream the model's response and print it, bit by bit.
        with sess.post(url, json=data, stream=True) as resp:
            # Stop early if the server answered with an error status
            resp.raise_for_status()
            for line in resp.iter_lines():
                if line:
                    bit = json.loads(line.decode("utf-8"))
                    piece = bit["message"]["content"]
                    reply += piece
                    print(piece, end="", flush=True)

        print()

        # Store the model's reply too, so the next request includes it.
        history.append({"role": "assistant", "content": reply})


# Call the main function
if __name__ == "__main__":
    main()
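The streaming loop in the script reads the response one line at a time, and each non-empty line is a small JSON object holding the next fragment of the answer. The sketch below isolates that parsing step so it can be tried without a running server. The helper `collect_reply` is made up for this example, the sample chunks are hand-written rather than captured from a real server, and the "done" flag on the last chunk is an assumption about the response format.

```python
import json


def collect_reply(lines):
    """Join the text fragments from an iterable of streamed JSON lines (bytes)."""
    parts = []
    for line in lines:
        if not line:
            continue  # skip empty keep-alive lines
        chunk = json.loads(line.decode("utf-8"))
        parts.append(chunk.get("message", {}).get("content", ""))
        if chunk.get("done"):
            break  # assumed marker for the final chunk
    return "".join(parts)


# Hand-written sample chunks, for illustration only
sample = [
    b'{"message": {"role": "assistant", "content": "Hello"}, "done": false}',
    b'',
    b'{"message": {"role": "assistant", "content": ", world"}, "done": false}',
    b'{"message": {"role": "assistant", "content": ""}, "done": true}',
]
print(collect_reply(sample))  # Hello, world
```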

Now let’s check how our script works!

To try it out, let’s ask Mixtral to give us a recipe for pancakes:

User => What ingredients do I need to make pancakes?

Assistant => To make basic pancakes, you will need the following ingredients:

* 1 cup all-purpose flour

* 2 tablespoons white sugar

* 1 teaspoon baking powder

* 1/2 teaspoon baking soda

* 1/4 teaspoon salt

* 3/4 cup buttermilk

* 1/3 cup milk

* 1 egg

* 2 tablespoons unsalted butter, melted

You can also add other ingredients such as vanilla extract, cinnamon, or blueberries to the batter if you like. To cook the pancakes, you will need a griddle or frying pan and some additional butter or oil for greasing the surface.

Here is a simple recipe for making pancakes:

1. In a medium-sized bowl, whisk together the flour, sugar, baking powder, baking soda, and salt.

2. In a separate bowl, whisk together the buttermilk, milk, egg, and melted butter.

3. Add the wet ingredients to the dry ingredients and stir until just combined. The batter should be slightly lumpy; do not overmix.

4. Preheat a griddle or frying pan over medium heat and grease it with butter or oil.

5. For each pancake, pour about 1/4 cup of batter onto the griddle or pan. Cook until bubbles form on the surface of the pancake and the edges start to look set, about 2-3 minutes.

6. Flip the pancake and cook until golden brown, about 2 more minutes.

7. Repeat with the remaining batter, adding more butter or oil to the griddle or pan as needed. Serve the pancakes hot, with your choice of toppings such as maple syrup, fresh fruit, or whipped cream.

I hope this helps! Let me know if you have any questions.

So far so good!

Mixtral gave me a pancake recipe. I haven’t tried it, but it’s a good start.

Let’s check if the next message will also continue our conversation. Let’s ask Mixtral to change the recipe so that it doesn’t contain gluten.

User => Unfortunately, I can't eat any gluten. Can you modify the ingredient list and the recipe accordingly?

Assistant => Sure! Here is a simple recipe for gluten-free pancakes:

Ingredients:

* 1 cup gluten-free all-purpose baking flour (such as Bob's Red Mill)

* 2 tablespoons granulated sugar

* 1/2 teaspoon salt

* 2 teaspoons baking powder (make sure it is gluten-free)

* 1/4 teaspoon baking soda

* 3/4 cup buttermilk (or use regular milk with a squeeze of lemon juice to sour it)

* 1/3 cup milk (regular or dairy-free)

* 2 large eggs

* 2 tablespoons unsalted butter, melted and cooled slightly

* 1 teaspoon pure vanilla extract

Instructions:

1. In a medium bowl, whisk together the flour, sugar, salt, baking powder, and baking soda.

2. In a separate small bowl or measuring cup, whisk together the buttermilk, milk, eggs, melted butter, and vanilla.

3. Pour the wet ingredients into the dry ingredients and stir until just combined. The batter may be a little lumpy, but that's okay. Don't overmix, as it can make the pancakes tough.

4. Preheat a large non-stick skillet or griddle over medium heat and lightly coat with butter or non-stick spray.

5. Using a 1/4 cup measuring cup, scoop the batter onto the skillet. Cook until bubbles form on the surface of the pancake and the edges start to look set, about 2-3 minutes. Flip and cook until golden brown, about 2 more minutes.

6. Repeat with the remaining batter, adding more butter or non-stick spray as needed. Serve the pancakes warm, with your favorite toppings. Enjoy!

And now, as a final test, we can ask Mixtral to summarize what we talked about:

User => Thanks! Can you give me a brief summary of our conversation so far?

Assistant => Of course! In our conversation, you initially asked for a list of ingredients to make pancakes. I provided the following standard pancake ingredients:

1. All-purpose flour - 1 cup

2. Sugar - 2 tablespoons

3. Baking powder - 1 teaspoon

4. Salt - 1/2 teaspoon

5. Egg - 1

6. Milk - 3/4 cup

7. Vegetable oil or melted butter - 2 tablespoons

However, you mentioned that you can't eat gluten, so I modified the recipe for gluten-free pancakes using the following ingredients:

1. Gluten-free all-purpose flour blend - 1 cup (make sure it includes xanthan gum or add 1/2 teaspoon)

2. Sugar - 2 tablespoons

3. Baking powder - 1 teaspoon

4. Salt - 1/2 teaspoon

5. Egg - 1

6. Milk (or dairy-free alternative) - 3/4 cup

7. Vegetable oil or melted butter - 2 tablespoons

To make gluten-free pancakes, mix the dry ingredients in one bowl and the wet ingredients in another before combining them. Cook small portions on a griddle or skillet over medium heat until bubbles form on top, then flip and cook until golden brown.

User => bye

Although it was to be expected that Mixtral would perform really well, the highlight is that our simple Python script is able to communicate with the OLlama server successfully and to stream the model’s answers.

It will work with any other model from the OLlama library and the best part is that OLlama greatly reduces the minimum system specifications needed to use LLMs.
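Since only the model name changes between models, one option is to read it from the command line instead of hard-coding it. A minimal sketch using the standard `argparse` module; the `--model` flag and the `parse_args` helper are made up for this example, and the chosen model is assumed to be available on the server already.

```python
import argparse


def parse_args(argv=None):
    """Parse the command-line options for the chat script."""
    parser = argparse.ArgumentParser(description="Chat with a locally served model.")
    parser.add_argument("--model", default="mixtral",
                        help="name of the model to request from the server")
    return parser.parse_args(argv)


# Simulate running the script with: --model codellama
args = parse_args(["--model", "codellama"])
print(args.model)  # codellama
```

The parsed value could then be used in place of the fixed "mixtral" string in the request data.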

Conclusions

In this blog post we managed to basically make a “Pocket ChatGPT”. It’s not as good as the actual ChatGPT, but it is a few thousand times cheaper as far as hardware cost goes.

With a tool like OLlama you can start building cool new apps that leverage the power of highly specialized LLMs.

One thing to note is that this tool is not made for fine-tuning models. Its purpose is simply to make LLMs more accessible. You won’t be using it for research purposes any time soon, but it’s a lot better than watching from the sidelines as “the big guys” use LLMs for their projects.
